While the industry chases the next AI benchmark and the next model announcement, something genuinely important happened on April 12, 2026, and it arrived with almost no fanfare: Linus Torvalds released Linux kernel 7.0. The announcement was accompanied by a typically deadpan Torvalds joke — he bumped the major version, he said, because 6.19 was getting difficult to count on his fingers and toes. No architectural break. No marketing slide. A merge window, seven release candidates, a tarball on kernel.org, a signature to verify.

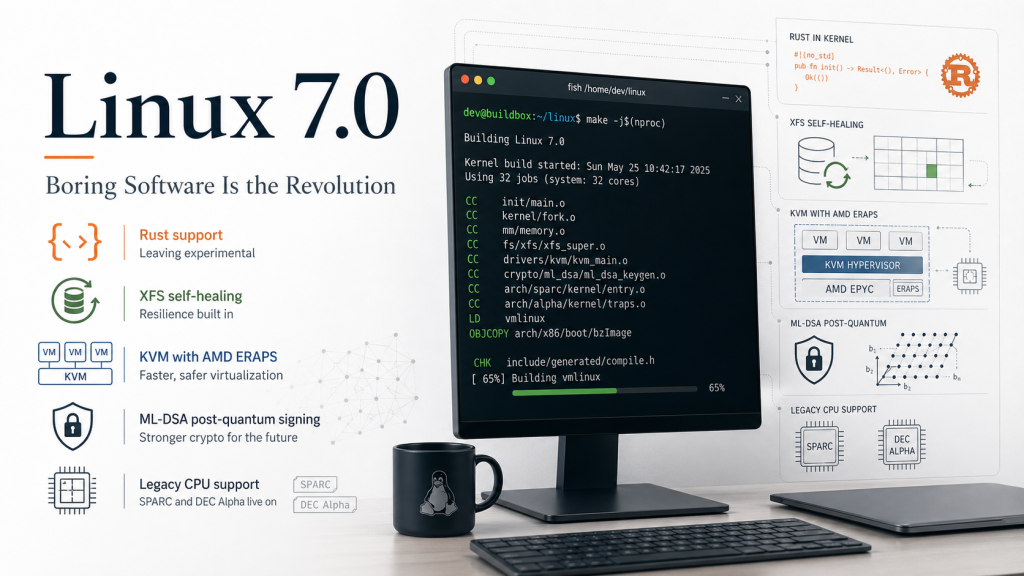

This is what real infrastructure looks like when it works. It’s patient. It’s incremental. It doesn’t ask you to be excited. And if you’ve been paying attention to the right signals, Linux 7.0 is one of the most consequential kernel releases in years — not because any single feature is revolutionary, but because five long-running engineering efforts reach maturity in the same cycle. Rust graduates from experimental. XFS becomes self-healing. KVM virtualizes AMD’s ERAPS. Module signing gets post-quantum cryptography. And, as a quiet reminder of what “long-term support” really means, the kernel continues to ship patches for SPARC and DEC Alpha.

This post tells each of those stories. Not as a feature list, but as evidence of what it takes to build infrastructure you can actually trust over decades.

Index

- The Memory Safety Story: Rust, No Longer Experimental

- The Filesystem Story: XFS Learns to Heal Itself

- The Virtualization Story: AMD ERAPS Meets KVM

- The Post-Quantum Story: ML-DSA Arrives in the Kernel

- The Heritage Story: SPARC and Alpha Still Alive

- What This Release Actually Means

The Memory Safety Story: Rust, No Longer Experimental

For more than three decades, the Linux kernel has been written in C. This is not a detail; it is the defining technical constraint of the project. C delivers extraordinary performance and complete control over the machine, and in return it demands that every developer be personally responsible for memory safety. Use-after-free, null pointer dereferences, buffer overflows, data races: entire categories of vulnerabilities exist because C cannot prevent them at compile time.

The cost of this bargain is not theoretical. A substantial fraction of the kernel CVEs from the past decade trace back to memory safety errors that the compiler, structurally, could not catch. Google’s Android security team has been publishing on this for years. Microsoft’s security response team has said the same thing about Windows.

The Rust for Linux project has been arguing since 2020 that there is a way out: a language with C’s performance and control but with memory safety enforced by the compiler. The project moved cautiously — experimentally — through kernel cycles. Drivers were written. The Android Binder driver, rewritten in Rust, shipped on hundreds of millions of Android devices. The build infrastructure matured.

At the 2025 Linux Maintainer Summit in Tokyo, the kernel maintainers made the decision formal. Linux 7.0 is the release where the [EXPERIMENTAL] warning comes off.

What this means practically:

- Rust is now a first-class language in the kernel tree alongside C

- New driver submissions in Rust are reviewed to the same standard as C code

- Distribution toolchains (Fedora 44, Ubuntu 26.04 LTS) now ship Rust build support enabled by default

- The NVMe subsystem has active Rust rewrite proposals — watch this space in 7.1 and 7.2

If you want to build a 7.0 kernel with Rust support enabled, the toolchain check is your first stop:

cd linux-7.0

make rustavailable

# Expected output: Rust is available!

scripts/config --enable CONFIG_RUST

make olddefconfig

make -j$(nproc)

If make rustavailable fails, the script at scripts/min-tool-version.sh will tell you the minimum rustc version required. Most distributions now package a compatible toolchain, but if you’re building on an older LTS you may need rustup.
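When the toolchain check fails, it helps to see the version gap directly. The following is a minimal sketch of that comparison. The helper name version_at_least is hypothetical (it is not part of the kernel tree), and it assumes a sort that supports -V, as GNU coreutils does.

```shell
# Sketch: compare an installed tool version against a required minimum.
# version_at_least is a hypothetical helper, not something the kernel ships.

# Succeeds (exit status 0) when version $1 is >= version $2.
# Relies on version-aware sorting (sort -V): if the required version sorts
# first, or both are equal, the installed version is new enough.
version_at_least() {
    local have="$1" need="$2"
    [ "$(printf '%s\n%s\n' "$need" "$have" | sort -V | head -n 1)" = "$need" ]
}

# Intended use from the top of a kernel source tree:
#   need="$(scripts/min-tool-version.sh rustc)"
#   have="$(rustc --version | awk '{print $2}')"
#   version_at_least "$have" "$need" || echo "rustc $have is older than $need"
```

A plain string comparison would get cases like 1.9.0 versus 1.10.0 wrong, which is why the sketch leans on version-aware sorting instead.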

The kernel will not be rewritten in Rust. C remains the lingua franca, and anyone predicting otherwise misunderstands the project. What Rust’s stabilization actually does is give maintainers a tool they didn’t have before: the option to write new, security-critical, memory-dangerous code in a language that eliminates an entire class of vulnerabilities at compile time. This is one of those slow, patient infrastructure changes that will shape the security properties of Linux systems for the next twenty years.

The Filesystem Story: XFS Learns to Heal Itself

If you operate Linux at any scale, you probably run XFS. It is the default filesystem on RHEL, Rocky Linux, AlmaLinux, and most server-oriented distributions. It was designed in 1994 at Silicon Graphics for workloads that punished lesser filesystems — scientific computing, video editing, high-throughput storage. It has been the dependable choice for enterprise Linux for a generation.

And until Linux 7.0, it had a brittle failure mode. When XFS detected metadata corruption — typically caused by a power failure, a bad sector, or a controller fault — its response was deterministic and operationally painful:

[ 183.442610] XFS (sda1): Metadata corruption detected at xfs_inode_buf_ops

[ 183.442618] XFS (sda1): Unmount and run xfs_repair

Translate that message into operational reality: take the volume offline, boot from rescue media, run xfs_repair, pray that the damage is confined to metadata and not user data, and explain to stakeholders why the system was down. On a live root filesystem, the unmounting requirement is a nightmare. On a container host with dozens of running services, it’s a cascade failure waiting to propagate.

Linux 7.0 introduces autonomous self-healing. The design principle is elegant: XFS already maintains redundant copies of critical metadata — inode btrees, allocation group headers, directory blocks. The new healing logic detects corruption at read time, cross-references the redundant copies, reconstructs the correct data in place, and continues operation.

[ 183.442610] XFS (sda1): Repairing corrupt inode btree in AG 2.

[ 183.442618] XFS (sda1): Self-healing complete. Filesystem operational.

This is not a replacement for RAID. It is not a replacement for backups. It is not protection against physical data loss that exceeds what XFS internally duplicates. It is protection against the single most common class of real-world metadata corruption events: the ones that have historically required human intervention and caused production outages. On a 7.0 kernel with a standard XFS-formatted volume, this activates automatically. No configuration change. No opt-in. Mount the volume, use it, and when something goes wrong at the metadata layer the filesystem quietly fixes itself and logs what it did.

Operational monitoring is straightforward:

# Watch self-healing events in real time

watch -n 2 'dmesg | grep -i "xfs.*heal\|xfs.*repair"'

# Full filesystem integrity check without unmounting

xfs_info /dev/sda1

xfs_db -r -c "check" /dev/sda1

If you manage a fleet of XFS-backed servers, adding xfs.*heal patterns to your centralized log aggregation (Loki, Elastic, Splunk, whatever) is fifteen minutes of work and gives you visibility into a whole category of events you previously could only detect after they had caused an outage.
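One wrinkle with a broad pattern such as xfs.*repair is that it also matches the older "Unmount and run xfs_repair" message, which means the opposite of a successful heal. The sketch below separates the two cases before anything reaches an alerting rule. It assumes the log strings quoted in this post; the exact wording on a real system may differ, and classify_xfs_events is a hypothetical helper name.

```shell
# Sketch: split XFS kernel messages into "healed" and "needs manual repair".
# The matched strings are the ones quoted in this post and are an assumption;
# adjust them to whatever your kernel actually logs.

# Reads kernel log text on stdin and prints one summary line.
classify_xfs_events() {
    awk '
        /XFS/ && /Self-healing complete/ { healed++ }
        /XFS/ && /run xfs_repair/        { manual++ }
        END { printf "healed=%d manual=%d\n", healed, manual }
    '
}

# Intended use:
#   dmesg | classify_xfs_events
#   journalctl -k -o cat | classify_xfs_events
```

A non-zero manual count is the case that still needs a human, so it is the one worth paging on.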

The Virtualization Story: AMD ERAPS Meets KVM

This is the feature with the most nuance, and the one most frequently described imprecisely. It deserves careful explanation.

ERAPS stands for Enhanced Return Address Predictor Security. It is a feature introduced on AMD Zen 5 processors — the 5th generation EPYC, codename “Turin.” To understand why it matters, we need to briefly revisit the speculative execution attacks of the past eight years.

In 2018, the SpectreRSB vulnerability demonstrated that the Return Stack Buffer (RSB) — the hardware structure that predicts the target of RET instructions during speculative execution — could be poisoned by an attacker to redirect speculation to attacker-controlled addresses. This could leak kernel memory to userspace, or host memory to guest VMs, through side-channel timing analysis.

The software mitigation that followed was RSB stuffing: on every context switch and every VMEXIT, the kernel executes 32 CALL instructions to overwrite the RSB with safe targets. This works. It also has a real, measurable performance cost — particularly in virtualization-heavy workloads, where VMEXITs are frequent. Eight years later, every Linux system running on AMD or Intel silicon is still paying this performance tax.

ERAPS is the hardware answer. On Zen 5, two things change:

- TLB flushes automatically trigger RSB flushes, eliminating the need for explicit software-driven RSB stuffing at the most common stuffing-trigger points.

- The RSB is expanded to 64 entries in VM contexts (previous AMD generations had 32).

What Linux 7.0 does, specifically, is add the initial support in KVM’s AMD SVM code to virtualize ERAPS for guest VMs. When ERAPS is exposed to the guest:

- The guest can reduce or eliminate its own RSB stuffing on context switches

- The guest benefits from the hardware’s automatic RSB flush on CR3 writes

- The guest can safely raise its RSB stuffing loop count from the hardcoded 32 to match the actual 64-entry hardware RSB

This is the important distinction that sometimes gets lost: ERAPS is not merely a security feature, it’s a performance recovery feature. It reduces the software mitigation tax that Spectre imposed. For EPYC Turin virtualization hosts running latency-sensitive guest workloads — databases, busy JVMs, microservices with high context-switch rates — this will be measurable.

One important caveat: ERAPS virtualization in 7.0 requires NPT (Nested Page Tables) to be enabled in KVM. This is the standard configuration for any production KVM deployment, so in practice it’s a non-issue. Shadow paging configurations do not benefit from the automatic RSB flush on guest context switches and would require additional hypervisor-side mitigation.

Verifying ERAPS after booting 7.0 on Zen 5 hardware:

# On the host: confirm ERAPS is enumerated by the CPU

grep -m1 "eraps" /proc/cpuinfo

# Check that KVM exposes ERAPS to guests

cat /sys/module/kvm_amd/parameters/eraps

# In a running guest: verify the larger RSB is accessible

dmesg | grep -i "rsb\|eraps\|return stack"

A final note on terminology. You will see ERAPS described loosely as “RSB or return-address-predictor protection.” This is directionally correct but incomplete. ERAPS is not only protection. It is the recovery of virtualization performance that was lost to SpectreRSB mitigations, delivered as a hardware feature on Zen 5 and now accessible to guest VMs on Linux 7.0. If you are sizing a new EPYC virtualization fleet, the performance implication is the headline, not the security flag.
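For fleet-wide checks, a bare substring grep on /proc/cpuinfo can produce false positives if another flag happens to contain the same letters. The sketch below matches a flag only as a whole word on the flags line. The flag name eraps is taken from this post and is an assumption about how the kernel enumerates the feature; has_cpu_flag is a hypothetical helper name.

```shell
# Sketch: test for a CPU flag as a whole word rather than a substring.
# The flag name passed in (for example "eraps") is an assumption taken
# from the surrounding text.

# has_cpu_flag FLAG [FILE]
# Succeeds if FLAG appears as its own word on the first "flags" line of
# FILE (default: /proc/cpuinfo).
has_cpu_flag() {
    local flag="$1" file="${2:-/proc/cpuinfo}"
    grep -m1 '^flags' "$file" | tr ' \t' '\n\n' | grep -qxF -- "$flag"
}

# Intended use:
#   if has_cpu_flag eraps; then echo "ERAPS enumerated"; else echo "no ERAPS"; fi
```

The optional file argument makes it possible to run the same check against a cpuinfo snapshot collected from another host.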

The Post-Quantum Story: ML-DSA Arrives in the Kernel

The post-quantum cryptography transition is one of the most significant long-term changes happening to the global software supply chain, and almost nobody outside of cryptography circles is paying adequate attention.

The situation in brief. RSA and ECDSA, the classical signature algorithms that secure essentially all current code signing, TLS certificates, SSH host authentication, and kernel module signing, are vulnerable to Shor’s algorithm — an efficient quantum algorithm for factoring integers and computing discrete logarithms. A sufficiently large quantum computer running Shor’s algorithm can forge RSA and ECDSA signatures. No such computer exists today. Credible ones may exist in ten to fifteen years. Migrating a global cryptographic infrastructure takes ten to fifteen years. The math is tight.

NIST’s response has been the post-quantum cryptography standardization process, which in August 2024 finalized three standards: ML-KEM (key encapsulation), ML-DSA (digital signatures), and SLH-DSA (stateless hash-based signatures). ML-DSA is based on Module Learning With Errors (MLWE) — a lattice problem for which no efficient quantum algorithm is known.

Linux 7.0 adds support for ML-DSA as a valid signature scheme for kernel module authentication. And — just as importantly — it removes support for SHA-1-based module signing. SHA-1 has been practically broken for years; its removal from the module signing pipeline is long overdue.

For infrastructure teams operating locked-down secure-boot environments with custom kernel module signing pipelines, this is the most operationally significant security change in 7.0. If you maintain a signing pipeline, you need to evaluate your toolchain’s ML-DSA readiness and plan the migration before SHA-1-signed modules start failing to load.
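A reasonable first step in that evaluation is an inventory of which hash algorithm each of your modules is currently signed with. The sketch below pulls that field out of modinfo output. It assumes modinfo prints a sig_hashalgo line for signed modules; sig_hash_from_modinfo is a hypothetical helper name, and the module directory in the usage comment is only an example.

```shell
# Sketch: report the signature hash algorithm of a kernel module.
# Assumes `modinfo` output contains a "sig_hashalgo:" line when the module
# is signed; the helper name is hypothetical.

# Reads modinfo output on stdin; prints the hash algorithm, or "unsigned"
# when no sig_hashalgo field is present.
sig_hash_from_modinfo() {
    awk -F': *' '
        $1 == "sig_hashalgo" { print $2; found = 1 }
        END { if (!found) print "unsigned" }
    '
}

# Intended use (example path; point it at wherever your modules live):
#   for m in /lib/modules/"$(uname -r)"/extra/*.ko; do
#       printf '%s %s\n' "$(modinfo "$m" | sig_hash_from_modinfo)" "$m"
#   done | grep '^sha1 '
```

Anything the final grep prints is a module that would need re-signing before moving to a kernel that rejects SHA-1 signatures.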

The practical workflow, assuming OpenSSL 3.5 or later (the first release series with native ML-DSA support):

# Generate an ML-DSA signing key pair

openssl genpkey -algorithm mldsa65 -out signing_key.pem

openssl req -new -x509 -key signing_key.pem -out signing_cert.pem \
-days 3650 -subj "/CN=My Kernel Module Signing Key/"

# Sign a kernel module

/usr/lib/linux-kbuild-7.0/scripts/sign-file \
mldsa65 signing_key.pem signing_cert.pem my_module.ko

# Verify the signature is attached

modinfo my_module.ko | grep -i sig

Configuring a 7.0 kernel build to use ML-DSA by default:

scripts/config --enable CONFIG_MODULE_SIG

scripts/config --enable CONFIG_MODULE_SIG_ALL

scripts/config --set-str CONFIG_MODULE_SIG_HASH "mldsa65"

scripts/config --disable CONFIG_MODULE_SIG_SHA1

This is an early, narrow adoption of post-quantum cryptography in a critical piece of infrastructure. TLS will follow. SSH host keys will follow. Web PKI will follow. Each of these migrations will take years. The kernel module signing migration in Linux 7.0 is a small, concrete proof point that the tooling is ready, and that anyone waiting to start their own migration is already behind schedule.

The Heritage Story: SPARC and Alpha Still Alive

Tucked into the 7.0 merge window, Phoronix noticed something that reads almost like a geological entry: patches for Sun/Oracle SPARC and DEC Alpha processors. In the same release cycle that stabilizes Rust and introduces post-quantum cryptography, the Linux kernel also fixed a user-space corruption bug during memory compaction on Alpha and added clone3 syscall support to SPARC.

SPARC is a RISC architecture originally developed by Sun Microsystems and inherited by Oracle in 2010. DEC Alpha was Digital Equipment Corporation’s remarkable 64-bit RISC architecture from the 1990s, genuinely ahead of its time; Compaq announced its phase-out in 2001, and the last systems were sold under HP later that decade. Both architectures are decades past their mainstream relevance.

And yet the Linux kernel continues to support them. Not perfunctorily. Actively. Patches reviewed and merged within the standard maintenance process, by people who care enough to do the work.

This is worth pausing on, because it illustrates something that is easy to miss in the usual feature-count coverage of kernel releases. The Linux kernel’s commitment to architectural breadth is not sentimentality. It reflects three realities that matter to anyone who thinks seriously about infrastructure:

Legacy systems exist in production. SPARC hardware still runs specific financial and governmental workloads where replacement cycles are measured in decades, not quarters. Without continued kernel support, these environments face a choice between unsupported software stacks and catastrophically expensive hardware migrations. The kernel keeping SPARC alive is the difference between “maintainable” and “stranded.”

Architectural diversity is a forcing function for good code. Code that must compile and run correctly on SPARC, Alpha, x86_64, ARM64, RISC-V, and LoongArch simultaneously is structurally forced to respect architecture-neutral abstractions. It cannot casually assume x86 memory ordering, x86 page sizes, or x86 anything. This makes the codebase more portable and more honest than it would be if it optimized exclusively for the dominant architecture of the moment.

Hardware preservation is real work. DEC Alpha systems are actively used by computer-architecture research groups and hardware-preservation communities. A working Linux port is the difference between a research platform and a museum piece.

None of this is exciting in a headline sense. All of it is the kind of patient, quiet, uncelebrated maintenance that keeps the broader ecosystem trustworthy.

What This Release Actually Means

There is a particular temptation, when writing about Linux kernel releases, to treat them as product launches. Feature lists. Comparison tables. Marketing-adjacent narratives about “what’s new” and “why you should upgrade.”

This is the wrong frame. Linux kernel releases are not products. They are dispatches from a thirty-five-year engineering project that millions of us depend on, most of the time without thinking about it. The measure of a kernel release is not how exciting it is. The measure is whether it keeps the system trustworthy for the next twenty years.

By that measure, Linux 7.0 is a remarkable release. Rust’s graduation means that memory-unsafe driver categories now have a credible alternative path. XFS self-healing means that the most common enterprise Linux filesystem has a meaningfully better failure mode. ERAPS virtualization means that the hardware industry has finally started paying back the performance tax that speculative-execution mitigations imposed. ML-DSA signatures mean that the kernel module signing pipeline is among the first critical pieces of infrastructure to begin the post-quantum migration — quietly, correctly, ahead of schedule. And the continued maintenance of SPARC and Alpha is a quiet reminder of what the project’s commitments actually are.

None of this will appear in a marketing deck. All of this will shape what infrastructure looks like in 2040.

For anyone running a kernel-forward distribution, the upgrade path is easy: Ubuntu 26.04 LTS “Resolute Raccoon” and Fedora 44 both ship 7.0 as their default kernel. For everyone else — if you have reason to build your own, or to test specific configurations before distribution packages land — the source is at kernel.org, the GPG signatures are published, and a full build from a clean checkout on a modern workstation takes roughly ten minutes:

wget https://cdn.kernel.org/pub/linux/kernel/v7.x/linux-7.0.tar.xz

wget https://cdn.kernel.org/pub/linux/kernel/v7.x/linux-7.0.tar.sign

unxz linux-7.0.tar.xz

gpg --verify linux-7.0.tar.sign linux-7.0.tar # always verify signatures

tar -xf linux-7.0.tar && cd linux-7.0

cp /boot/config-$(uname -r) .config # start from known-good

make olddefconfig

time make -j$(nproc) LOCALVERSION="-custom"

sudo make modules_install

sudo make install

sudo update-grub # or grub2-mkconfig on RHEL

The open-source model continues to deliver what no proprietary alternative can: transparency, auditability, architectural breadth, and the patient maturation of engineering investments over multi-year timescales. Linux 7.0 is not a revolution. It is the slow, persistent, boring revolution, the only kind that actually lasts.